Andromeda can push scoped metrics directly to Grafana Cloud, VictoriaMetrics, or any Prometheus-compatible remote-write endpoint.

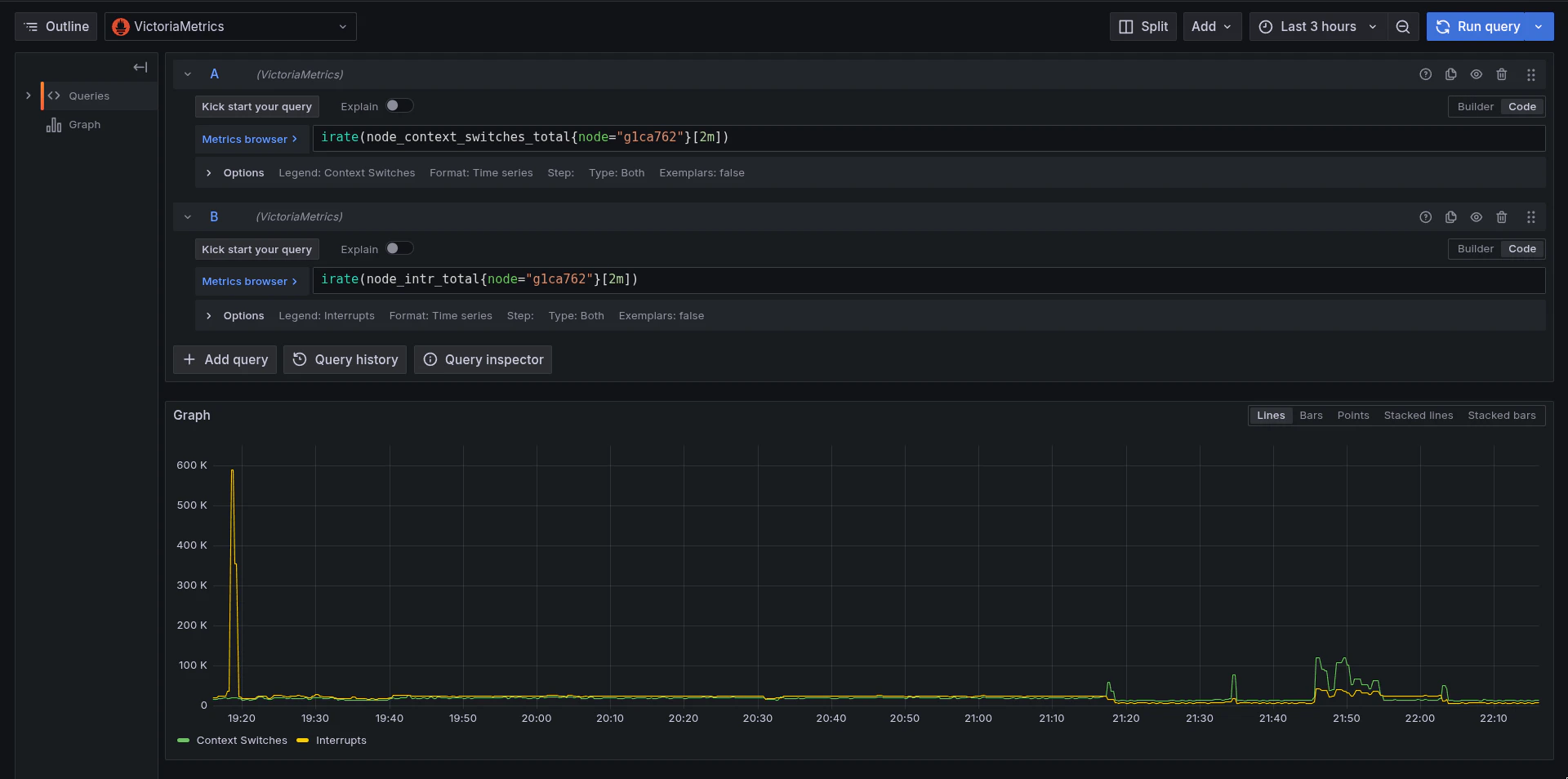

What gets pushed

The feed is filtered to your assigned nodes and namespaces. It typically includes:

- All GPU metrics (`DCGM_FI_*`)

- Container CPU and memory

- Slurm job allocation metrics

- Node metrics for assigned nodes
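When deciding which categories to request, it can help to sort the metric names you already use into those buckets. The sketch below does that by name prefix. Only the `DCGM_FI_` prefix for GPU metrics comes from this page; the `container_`, `slurm_`, and `node_` prefixes are assumptions based on common exporters and may not match the names in your feed.

```python
# Hypothetical prefix-to-category mapping. Only "DCGM_FI_" (GPU) is documented
# on this page; the other prefixes are assumptions based on common exporters.
CATEGORY_PREFIXES = {
    "gpu": ("DCGM_FI_",),
    "container": ("container_",),
    "slurm": ("slurm_",),
    "node": ("node_",),
}


def categorize(metric_name: str) -> str:
    """Return the first category whose prefix matches the name, else 'other'."""
    for category, prefixes in CATEGORY_PREFIXES.items():
        if metric_name.startswith(prefixes):
            return category
    return "other"


def requested_categories(metric_names: list[str]) -> set[str]:
    """Collect the categories needed to cover a list of metric names."""
    return {categorize(name) for name in metric_names} - {"other"}
```

For example, `requested_categories(["DCGM_FI_DEV_GPU_UTIL", "node_load1"])` yields `{"gpu", "node"}`, which would map to requesting the GPU and node categories.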

Set up remote write

- Share the remote-write endpoint URL and authentication method securely with Andromeda Support.

- Specify which metric categories are needed: GPU, container, Slurm, node, or all.

- Andromeda configures and deploys the integration.

- Metrics begin flowing within minutes.
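Once the integration is live, one way to confirm that samples are arriving is to query the receiving side. The sketch below assumes your endpoint also exposes a Prometheus-compatible HTTP query API (`/api/v1/query`); that is an assumption about your provider, not part of the Andromeda integration. The base URL you pass in is the query URL (not the remote-write URL), and any authentication headers are yours to supply.

```python
import json
import urllib.parse
import urllib.request
from typing import Optional

# PromQL: count how many series with a DCGM_FI_* metric name currently exist.
GPU_SERIES_QUERY = 'count({__name__=~"DCGM_FI_.*"})'


def build_query_url(base_url: str, promql: str) -> str:
    """Build a Prometheus HTTP API instant-query URL."""
    query = urllib.parse.urlencode({"query": promql})
    return base_url.rstrip("/") + "/api/v1/query?" + query


def extract_count(response: dict) -> int:
    """Read the value of a count() instant query; 0 if no series matched."""
    if response.get("status") != "success":
        raise RuntimeError(f"query failed: {response}")
    result = response["data"]["result"]
    if not result:
        return 0  # count() over an empty vector returns no samples
    # Each instant-vector sample is [unix_timestamp, "value-as-string"].
    return int(float(result[0]["value"][1]))


def gpu_series_count(base_url: str, headers: Optional[dict] = None) -> int:
    """Query the receiving endpoint for the number of GPU series (network call)."""
    request = urllib.request.Request(
        build_query_url(base_url, GPU_SERIES_QUERY), headers=headers or {}
    )
    with urllib.request.urlopen(request, timeout=10) as resp:
        return extract_count(json.load(resp))
```

Calling `gpu_series_count("https://<your-query-endpoint>", headers={...})` should return a non-zero count once GPU metrics are flowing; a result near the per-node figure under Expectations times your node count is a reasonable sanity check.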

Expectations

Remote-write volume and downstream cost depend on the selected metric categories. Estimate ingest rate before enabling broad GPU, container, Slurm, and node metric sets.

- Latency: Samples are pushed on a ~15-30 second cadence, matching scrape intervals.

- Volume: Roughly 1,000-5,000 samples/sec per 8-GPU node, depending on metric selection. Verify that the endpoint can handle this ingest rate.

- Labels: Metrics arrive with their original labels (`cluster`, `namespace`, `node`, `pod`, `gpu`, etc.). Relabeling can be applied on the receiving side.

- Cost: If the endpoint charges per series or per sample, estimate cost from the Metrics Reference. GPU metrics alone produce ~18 series per GPU × 8 GPUs = ~144 series per node.
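The volume and cost figures above can be turned into a quick fleet-level estimate. This is a minimal sketch that reuses the page's own numbers (1,000-5,000 samples/sec per 8-GPU node, ~18 GPU series per GPU); the node count is your input, and the series total covers GPU metrics only, so container, Slurm, and node series come on top.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass
class IngestEstimate:
    samples_per_sec_low: int
    samples_per_sec_high: int
    samples_per_day_low: int
    samples_per_day_high: int
    gpu_series: int  # GPU (DCGM_FI_*) series only


def estimate(
    nodes: int,
    gpus_per_node: int = 8,
    per_node_low: int = 1_000,   # samples/sec per 8-GPU node (low end)
    per_node_high: int = 5_000,  # samples/sec per 8-GPU node (high end)
    gpu_series_per_gpu: int = 18,
) -> IngestEstimate:
    """Scale the per-node figures to a fleet.

    The per-node sample rates are quoted for 8-GPU nodes and are not rescaled
    when gpus_per_node changes; only the GPU series count uses gpus_per_node.
    """
    low = nodes * per_node_low
    high = nodes * per_node_high
    return IngestEstimate(
        samples_per_sec_low=low,
        samples_per_sec_high=high,
        samples_per_day_low=low * SECONDS_PER_DAY,
        samples_per_day_high=high * SECONDS_PER_DAY,
        gpu_series=nodes * gpus_per_node * gpu_series_per_gpu,
    )
```

For a 16-node allocation, `estimate(16)` gives 16,000-80,000 samples/sec (about 1.38-6.91 billion samples/day) and 2,304 GPU series, which you can multiply by your provider's per-sample or per-series price.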